As @dstrauss pointed out this morning, this has been derailing several threads lately, so perhaps by having a specific place to discuss it we can avoid, or at least reduce, some of the walkabouts.

So with that preface, first of all, this really is a complex and multifaceted topic. However, I will start by stating some general opinions on it.

- Breaches and exploits are becoming more numerous, more potentially dangerous, and harder to detect, hence the urgency that some action be taken.

- Individual users should bear some responsibility: for one thing, they shouldn’t be knowingly pirating apps or content, and they should heed the warnings that all of the major browsers now show when a site or link may be unsafe.

- OTOH, expecting them to be primarily responsible isn’t the answer either. For one thing, the exploits and hacks have become far more sophisticated than the user would know how to prevent or deal with, and IMHO they shouldn’t have to, given the role that the vast majority of tech plays in their lives.

- Government could and should play a role, but it has generally been so ham-handed and/or political over the last decade or so that I don’t have a lot of faith at this point. Witness the Declaration post from a few days ago, or the push in the EU to force all the phone makers to USB-C.

-

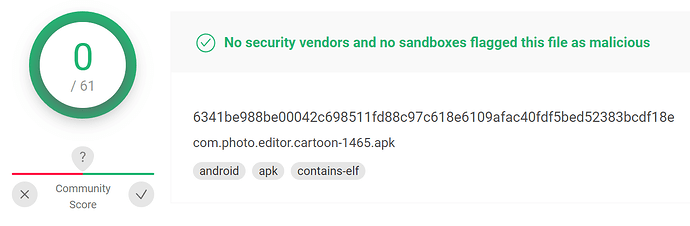

So that leaves the industry and possibly some more robust coordinated industry efforts that members pledge to adhere to. I’m thinking of certain ISO standards for instance. And I think that requiring the App Store as the only method to install an app is problematic at best, though it does provide at least a small amount of protection for instance with Apple’s review process, or Google Play store protect scanning.

I’ll readily admit they are far from perfect though, and at least to date they achieve only “better than nothing at all” status.

Ok, done for the moment, but I do look forward to others’ views and I intend to participate as well.