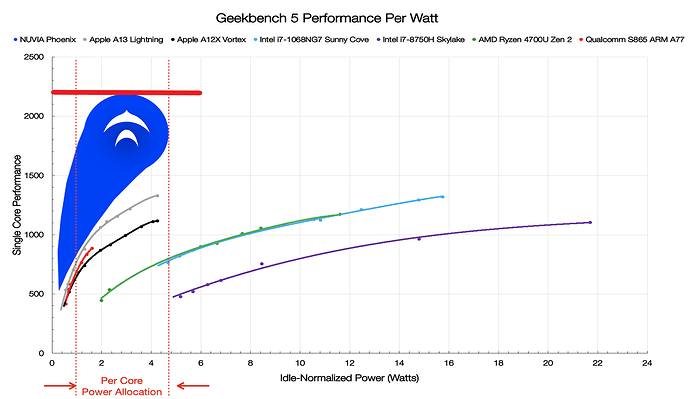

Oh ye of little faith, @dstrauss and @Dellaster. Here is a hint: three years ago, Ian Cutress at AnandTech already gave us the answer key to this engineering mystery, and most people glossed over it. In 2020, it was revealed that one of NUVIA’s design objectives was to hit a Geekbench 5 single-threaded score of 2200 at a mere 3W. Get the picture yet?